-

New paper out today in a volume edited by Adam Safron and Michael Levin (“World models in natural and artificial intelligence”). “A sentence is worth a thousand pictures: can large language models understand hum4n L4ngu4ge and the W0rld behind W0rds?” (with Evelina Leivada, Gary Marcus, and Fritz Günther) [Volume link] [Paper link]

-

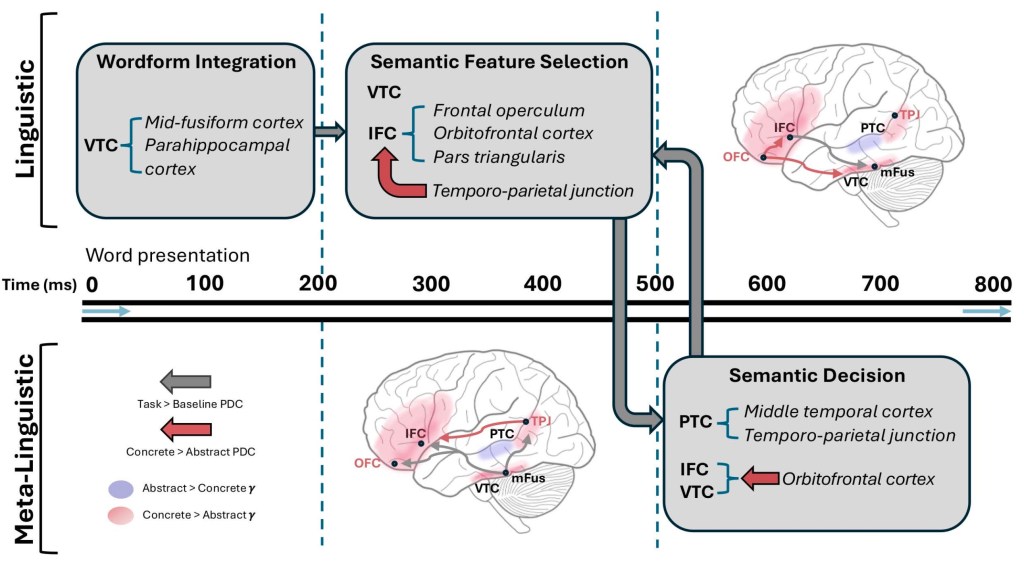

New intracranial EEG paper published in PLOS Biology, exploring single-word concreteness judgments during reading [PDF]: “Neurobiological models of conceptual processing have been limited in spatiotemporal resolution, and uncertainty remains about the causal role of specific regions in concept representation. We utilized intracranial recordings in human neurosurgical patients with epilepsy (n = 19) during a concreteness judgement paradigm…

-

A conversation with Jack Roycroft-Sherry on his podcast, covering language evolution, semantics, and AI.

-

A review of Gregory Hickok’s latest book, Wired for Words, which focuses on topics in syntax and semantics. The PDF is available here, and a link to the journal is here.

-

New paper in Computational Linguistics with Giosuè Baggio critiquing some recent claims about the capacities of LLMs [PDF]: “Mandelkern and Linzen (2024) argue that words generated by language models (LMs) are linked to causal histories of use within human linguistic communities, and ultimately to their referents. Therefore, LMs’ words refer. We qualify Mandelkern and Linzen’s…

-

New paper published in Scientific Reports [PDF here]: Merge-based syntax is mediated by distinct neurocognitive mechanisms in 84,000 individuals with language deficits across nine languages. “In the modern language sciences, the core computational operation of syntax, ‘Merge’, is defined as an operation that combines two linguistic units (e.g., ‘brown’, ‘cat’) to form a categorized constituent…

-

I recently took part in Michael Levin’s ongoing symposium on Platonic spaces in biology. The symposium contains excellent talks from Lauren Ross, Karl Friston, Chris Fields, Iain McGilchrist, and many others. My lecture focused on how we can use formalized algebraic models to narrow the space of candidate neural mechanisms for language. It contains a…
